Growing up in and through the 80s as an avid gamer, I found that ‘bits’ was the buzzword to use. You’d just throw it into random gaming conversations, even if you didn’t really know what you were talking about, in an attempt to sound like you did. Bits, they were everywhere for a couple of decades.

This site’s name even comes from the whole bits thing. Little Bits of Gaming (as the site was named before I added movies to the mix) came about for a couple of reasons. The first was that I originally wrote smaller articles about gaming that you could read in a couple of minutes, so the main aim of this blog was to provide ‘little bits of gaming’. The second reason was the whole bits connection to gaming.

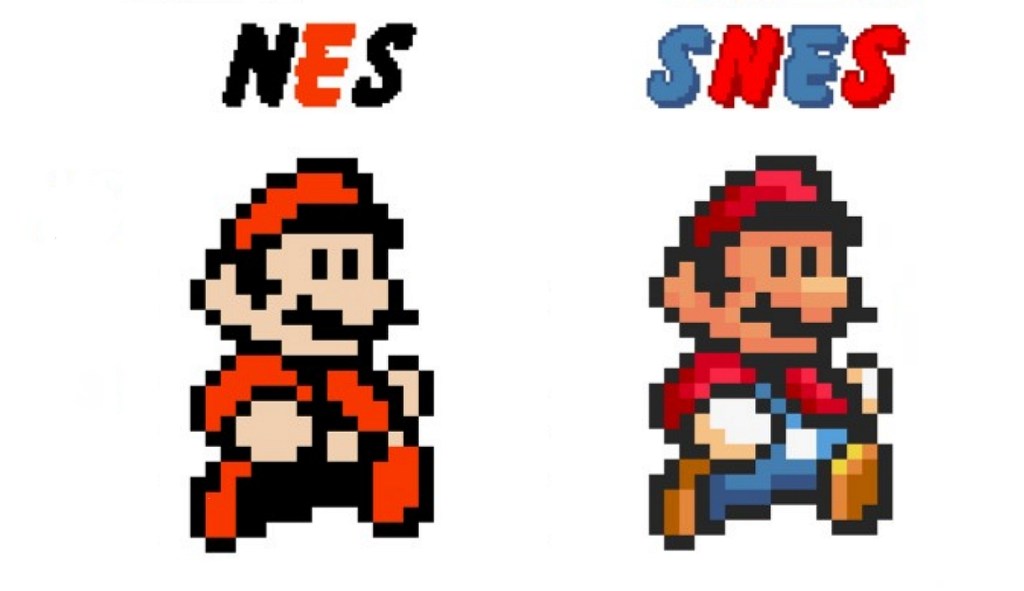

I’m sure younger readers will have no idea what I’m talking about right now, as bits are just not used anymore unless you are talking about retro gaming. Truth be told, I’m not even sure how or why the whole bits thing began. It seems to stem from the third generation of gaming, that early-to-mid-80s era when Nintendo launched their Famicom/NES and Sega had their Master System 8-bit consoles. But then again, I think the whole 8-bit thing was perhaps created retroactively once the more powerful 16-bit machines hit the market, as a way to differentiate between the two generations. Sega even had ‘16-bit’ proudly displayed on their Mega Drive console. See, we didn’t really use ‘gaming generations’ as a phrase back then, or at least I certainly didn’t. We had 8-bit and 16-bit machines instead.

Now, I actually grew up with computers, not consoles. We had a Commodore 64 in the mid-80s when Nintendo and Sega were doing their thing. I had friends who owned gaming consoles, so I did get to play on them, but it was computers that we had in our house when I was a kid. Even then, we never once referred to our C64 as an 8-bit computer. But now? Now the C64 is very much considered an 8-bit machine… even if it wasn’t at the time. Mind you, our first ever gaming machine was an Atari 2600, which very much was a console, but it never had bits attached to it either. The 2600 is considered part of the second generation of gaming. So would that have made it 4-bit, as it was the generation before the NES and Master System 8-bit machines? Which would then make the first generation of gaming the 2-bit era… right? But we never called them that. They were just things we could play games on; bits never came into it.

Anyway, after that 8-bit age of gaming came the 16-bit era: the PC Engine/TurboGrafx-16, the Super Famicom/Super NES and the Mega Drive/Genesis, with a few early contenders mixing 16 and 32-bit hardware thrown in too (the Neo Geo). The numbered bits thing really was just a way to highlight that a machine was more powerful, supposedly about twice as powerful, hence the doubling of the numbers from 8 to 16-bit. But here’s a question: what the hell was a ‘bit’? As far as I can tell, it referred to how much data the machine’s processor could handle in one go, which was loosely tied in with how many colours could be displayed, the resolution of the graphics and the basic processing power. I may be wrong, but that is about the best and simplest explanation I can find. So basically, the more bits, the better the game looked.

Really, it was just a way to measure the difference in processing power between machines. I’m not the most technically minded person around, so someone else could perhaps explain bits better than I can. But the point is that we didn’t really know or even care what bits were; it just meant the game looked better. We certainly held our heads high if we had a 16-bit machine while someone else had an 8-bit one, even if we had no idea what it all meant.

The bits thing carried on into the fifth generation of gaming too; it was now the 32-bit age of the Atari Jaguar, 3DO, Sega Saturn and, of course, the PlayStation. Some machines even liked to boast their bits in their names: the Amiga CD32 and Sega’s 32X add-on both have 32 right there in their names. Nintendo, meanwhile, decided to one-up everyone else and release the Nintendo 64. 64 bits in the 32-bit era? I suppose that technically, the N64 was 64-bit… using some clever 32-bit architecture. Again, I’m not massively tech-savvy, so someone else can explain how and why the N64 was both a 64 and 32-bit machine at the same time.

Then we moved on to the sixth generation of gaming, and this is where the bits thing began to disappear. The whole idea of calling each successive age of gaming a ‘generation’ really came alive around now. Even though we were now in a 64-bit age, the term just never really got used that much. The machines themselves dropped the idea of putting bits in their names too. The Dreamcast, PlayStation 2, GameCube and Xbox did away with 64-bits and were simply given names of their own or named as sequels to earlier machines. We were still in the 64-bit era of gaming, but no one really called it that; it was just the new generation of gaming. Bits were dying out.

Of course, this all brings us up to date in terms of bits: the bits thing is just not really used anymore. We are now in the ninth generation of gaming. The seventh generation, the PlayStation 3, Xbox 360 and Wii era, would’ve been the 128-bit age. The eighth, with the PlayStation 4, Xbox One and Wii U/Switch consoles, would be the 256-bit era. Then, of course, the current ninth generation of PlayStation 5 and Xbox Series X/S would be the 512-bit age of gaming. Yet we never use bits anymore, do we? I’ve never heard anyone refer to their PlayStation 5 as a 512-bit machine the way we used to a while back.

It’s just strange to me that at the time, we never really used bits to describe our consoles. Nobody back in the 80s said the NES was an 8-bit machine; it was just the NES. We never called the Mega Drive a 16-bit console… even though it had 16-bit right on its front. We may have used bits to sound like we knew what we were talking about on a technical level, when we really didn’t, but nobody ever said “I’m going home to play my 16-bit console”. It was just the Mega Drive or the SNES. Basically, bits were and still are a load of old bollocks: a buzz phrase now used retroactively to separate the early generations of gaming… but not the earliest or the later ones. There just seem to be the 8-bit and 16-bit eras, and then it becomes the PlayStation-and-beyond eras… very occasionally called the 32-bit era. A lot of youngsters don’t even know there was gaming before the 8-bit era because of the focus on the 8 and 16-bit years, while those earlier consoles are forgotten. Or they get bundled in with the 8-bit consoles when they were not 8-bit at all.

Bring back bits, I say, and let’s use them from the 2-bit consoles right through to the modern day, just for consistency’s sake, instead of only the 8, 16 and, sometimes, 32-bit years. Now if you’ll excuse me, I have to review some indie 8 and 16-bit style games on my 512-bit console.
